AI Metrics for CFOs: The Four-Layer Framework Beyond Tokens and Seats

Key takeaways

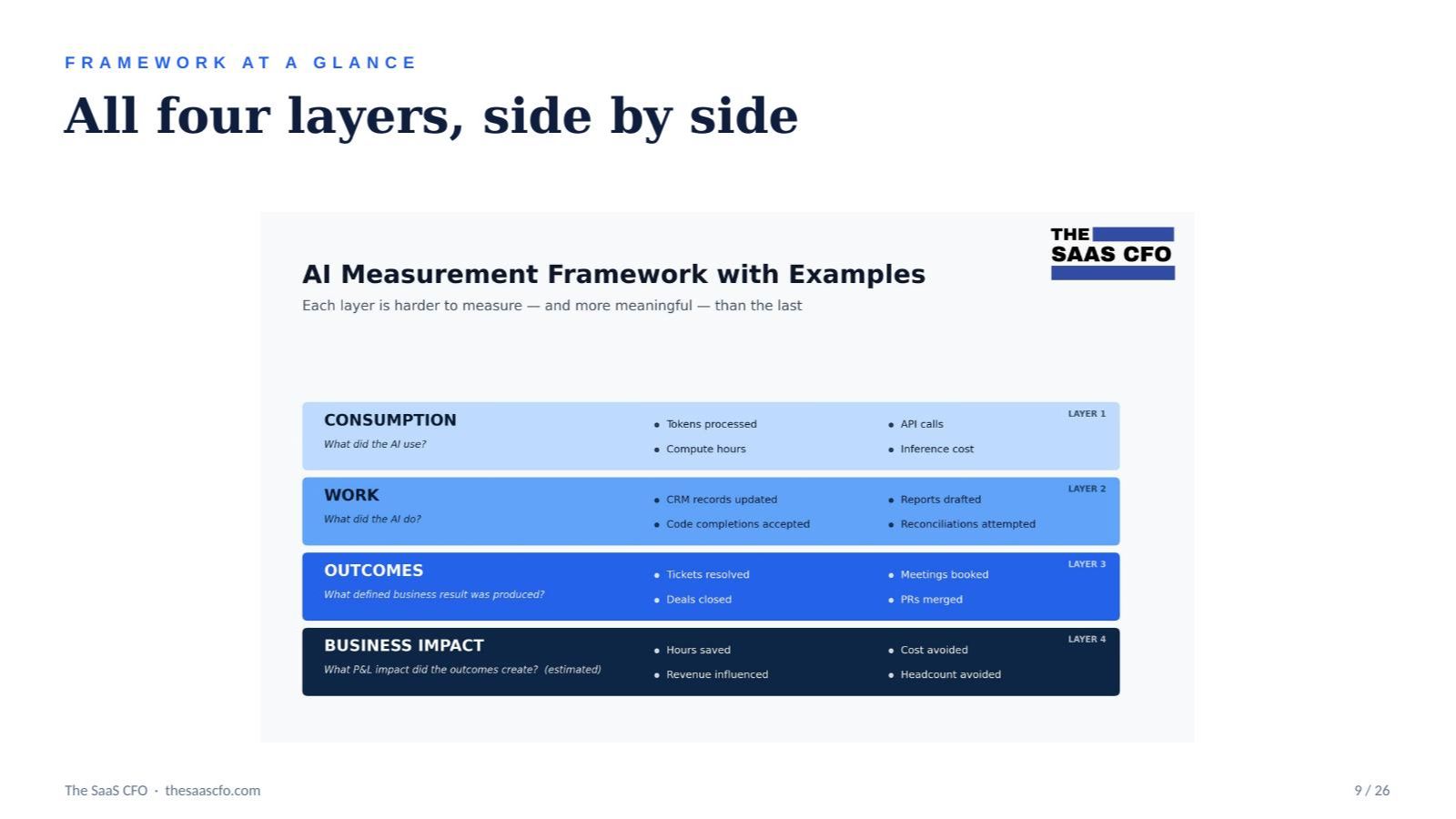

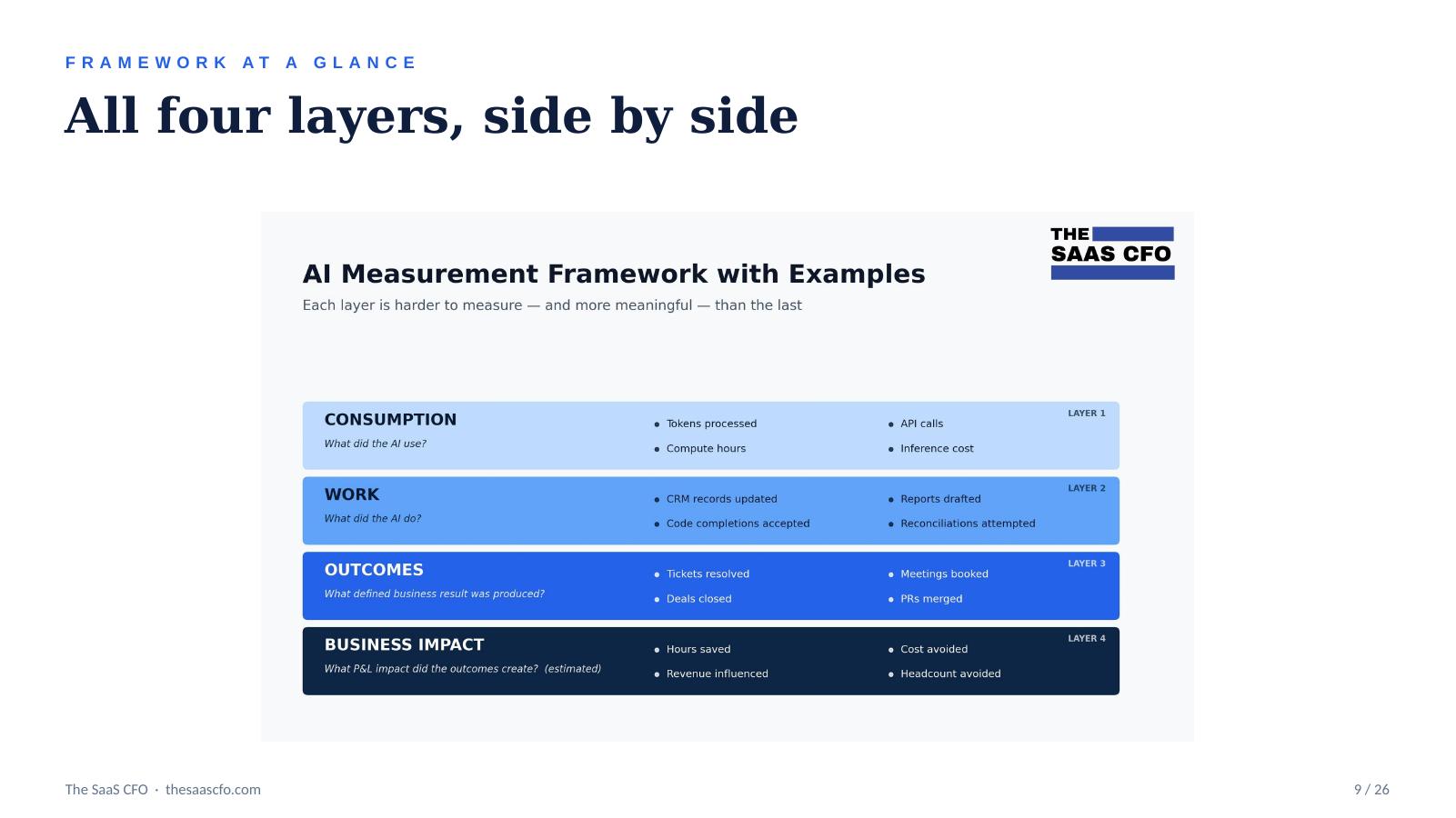

- AI measurement breaks into four layers: Consumption → Work → Outcomes → Business Impact. Each layer is harder to measure — and more meaningful — than the one below it.

- Seats and DAUs sit below Layer 1, and tokens never get past it. None of them are business metrics. A CFO doesn't care that your AI processed 9 billion tokens last quarter. Tokens matter on the expense side, but the conversation has moved beyond that.

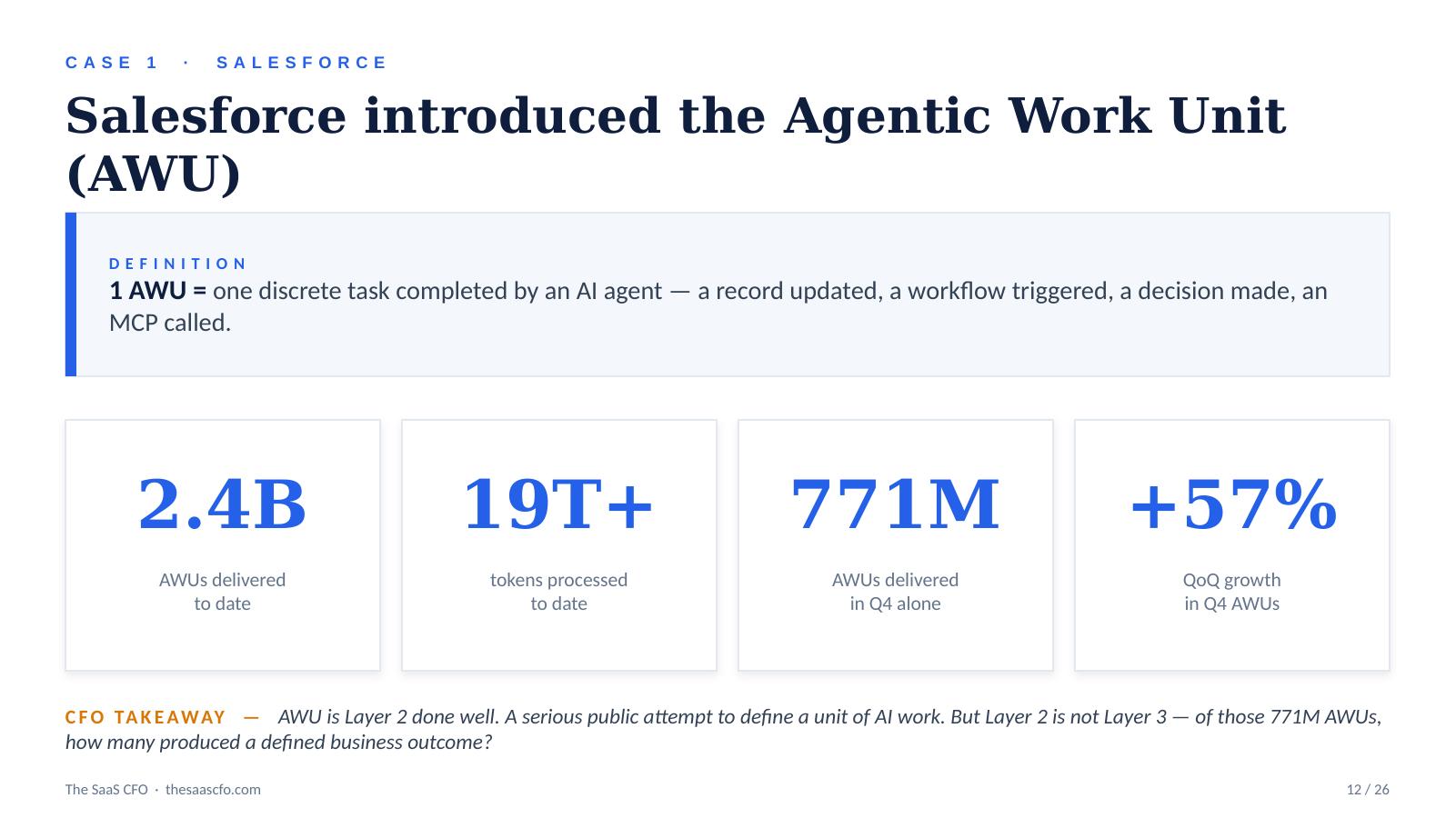

- Public tech companies are setting the pace on AI metrics: Salesforce's Agentic Work Unit, or AWU, is Layer 2; Intercom Fin's per-resolution pricing is Layer 3; ServiceNow's $100M internal savings claim is Layer 4.

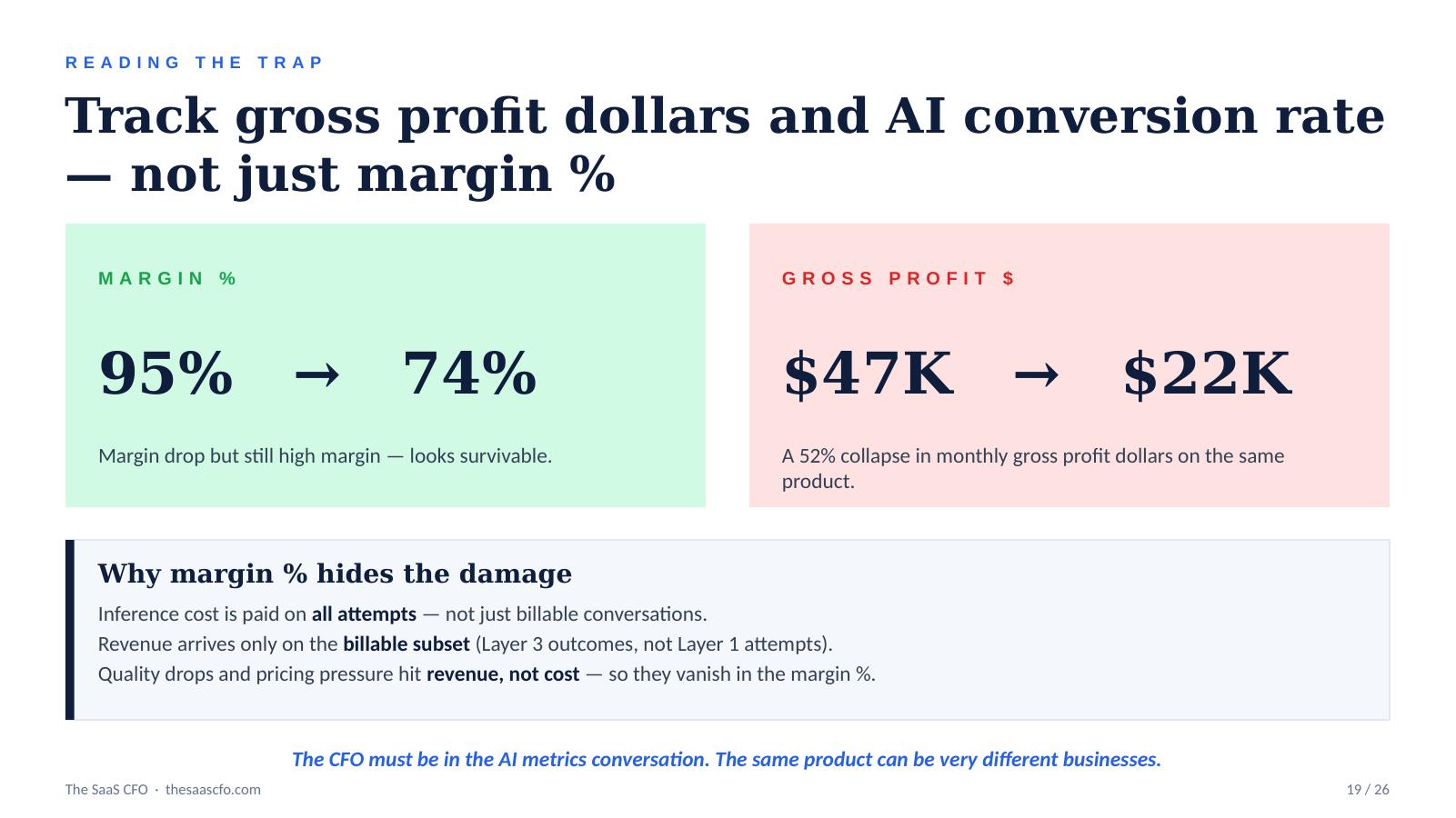

- A blended gross margin of 95% can drop to 74% — and gross profit dollars can collapse 52% — while the percentage still looks "fine." Measuring at the wrong layer hides the damage.

- Three things to do this week: pull a clean AI cost report, fill in a work-unit definition, and build a one-page AI metrics summary.

AI agents are doing real work now. They resolve support tickets, update CRM records, draft journal entries, review contracts, and ship code. Inference bills are growing faster than AI adoption. The org chart mix is shifting toward digital labor.

But most SaaS companies still can't report the work their AI is doing. The default metric became tokens. And tokens are not a business metric.

This is the measurement gap, and it's the single biggest reporting problem in SaaS today. It affects:

- Pricing — what you can credibly charge for

- Gross margin — inference cost vs. revenue, surfaced honestly

- Board reporting — what the AI actually achieved

- Customer ROI — your renewal and expansion narrative

- Investor diligence — the AI gross-margin questions that are about to be standard

If you're a SaaS CFO or founder trying to figure out which AI metrics matter and which ones are vanity in a trench coat, this framework is where to start.

The four-layer framework for measuring AI

Here are the four layers from infrastructure usage to business impact.

Layer 1: Consumption — What did the AI use?

Tokens processed. API calls. Compute hours. Inference cost.

Useful for cost management, especially when AI sits in COGS for revenue delivery. It's a necessary input to gross margin. Finance and product need it.

But it's not enough for customers, boards, or investors. "We processed 9B tokens" is a fact about your bill, not your business.

Layer 2: Work — What did the AI do?

CRM records updated. Reports drafted. Code completions accepted. Reconciliations attempted. Workflows triggered.

This is the right place to start. Layer 2 captures the countable actions the AI performed. You know the work happened. You don't yet know whether it mattered to your business or department.

This is the easiest layer to instrument, and the most underbuilt one. Most companies skip from Layer 1, tokens, to Layer 4, claimed business impact, without ever defining what work the AI is doing in between.

Layer 3: Outcomes — What defined business result was produced?

Tickets resolved. Demo meetings booked. Qualified leads created. Deals closed. PRs merged.

An outcome is a work unit verified to produce a defined business result. You can count it, and it has a clear business meaning. This is where outcome-based pricing lives.

It's also where most of the public AI pricing innovation is happening. Intercom Fin charges $0.99 per resolution, and Zendesk includes a fixed number of resolutions per agent per month.

Layer 4: Business Impact — What P&L effect did those outcomes create?

Hours saved. Cost avoided. Headcount avoided. Revenue influenced. Pipeline generated. Renewal lift. CSAT improvement.

This is the CFO endgame, and the agentic AI endgame. It's what customers, boards, and investors actually want to see. But Layer 4 is estimated, not counted, so the hard part isn't producing the number. It's defending the methodology that produces the number.

You can only credibly defend Layer 4 when it's anchored on Layer 3 outcomes that were anchored on Layer 2 work that was anchored on clean Layer 1 data.

Why the order matters

Each layer requires the layer below it to be true.

You cannot claim Layer 4 hours saved without Layer 3 resolutions. You cannot count resolutions without instrumented Layer 2 work. You cannot instrument work without clean Layer 1 data.

This is why the maturity curve looks like this:

| Maturity | Where they are |
| --- | --- |
| Most companies | Stuck at consumption: token charts only |
| Better | Building work-unit instrumentation |
| Leaders | Counting outcomes, and pricing on them |
| The best | Reporting business impact with defended methodology |

If you're showing your board a token chart, you're at the very bottom of this curve.

What public tech leaders are actually doing

The framework isn't theoretical. Public companies are setting the pace, because Wall Street analysts expect a real ROI conversation to justify stock price targets.

Salesforce: the Agentic Work Unit, or AWU, is Layer 2 done well

Salesforce introduced the Agentic Work Unit in 2026. The definition: one AWU equals one discrete task completed by an AI agent, such as a record updated, a workflow triggered, a decision made, or an MCP called.

The numbers Salesforce has disclosed:

- 2.4 billion AWUs delivered to date

- 19+ trillion tokens processed to date

- 771 million AWUs delivered in Q4 alone

- +57% quarter-over-quarter AWU growth in Q4

This is a serious public attempt to define a unit of AI work. It's Layer 2 done well.

But Layer 2 isn't Layer 3. Of those 771M AWUs, how many produced a defined business outcome? That's the question Salesforce hasn't fully answered yet, and it's the question every CFO needs to answer for their own AI features.

The inference-to-work ratio

If you put work units in the numerator and token counts in the denominator, you get an efficiency ratio. A rising ratio means your platform is getting more efficient: more work delivered per unit of inference. Track it monthly. It's one of the cleanest signals you can show a board.
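As a rough illustration of that ratio, the sketch below divides work units by tokens and scales the result to "work units per million tokens" so the number is readable. The function name, the scaling, and the monthly figures are assumptions made for this example, not data from any company.

```python
def work_per_million_tokens(work_units: int, tokens: int) -> float:
    """Work units delivered per one million tokens of inference."""
    if tokens <= 0:
        raise ValueError("tokens must be positive")
    return work_units / tokens * 1_000_000


# Hypothetical monthly figures: (month, work units, tokens). Not real data.
monthly = [
    ("2026-01", 120_000, 900_000_000),
    ("2026-02", 150_000, 1_000_000_000),
    ("2026-03", 200_000, 1_100_000_000),
]

ratios = [(month, work_per_million_tokens(w, t)) for month, w, t in monthly]

for month, ratio in ratios:
    print(f"{month}: {ratio:.1f} work units per 1M tokens")

# A ratio that rises month over month means more work per unit of inference.
is_improving = all(b[1] > a[1] for a, b in zip(ratios, ratios[1:]))
print("Efficiency improving:", is_improving)
```

Applied to the cumulative Salesforce figures above (2.4 billion AWUs against 19+ trillion tokens), the same function gives at most roughly 126 AWUs per million tokens. That is a lifetime average, not a monthly trend.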

ServiceNow: charge for Layer 1/2, defend Layer 4 internally first

ServiceNow runs a hybrid pricing model: seats plus AI usage entitlements, or Now Assist Packs. The disclosed numbers:

- Now Assist surpassed $600M ACV

- Net new ACV more than doubled YoY

- Deals over $1M nearly tripled QoQ

- One fast-food customer expanded Assist Pack entitlements 13x at renewal

Separately, ServiceNow CFO Gina Mastantuono disclosed roughly $100M in internal headcount-related savings from deploying ServiceNow's own AI products. That's Layer 4: ServiceNow is eating its own dog food to show business impact.

The CFO takeaway: charge for Layer 1/2 consumption. Defend Layer 4 internally first before asking customers to defend their numbers.

Intercom Fin: resolution as the unit and the price

Intercom Fin charges $0.99 per resolved conversation, layered on a $49/month per-seat fee with 50 resolutions included.

What counts as a resolution:

- Customer confirms Fin's answer was satisfactory

- Customer exits without requesting further help after Fin's last answer

- Assumed resolution: auto-triggers after 24 hours of no response

- Reversed if the customer returns later with the same issue, meaning billing is undone

Definition first. Instrumentation second. Pricing third. If you can't count it, you can't price it.
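The resolution rules above can be sketched as a small classifier. This is an illustration only: the field names, the order in which the rules are checked, and the data model are assumptions for this example, not Intercom's actual implementation or API. The included-resolution allowance is left out to keep the sketch short.

```python
from dataclasses import dataclass

PRICE_PER_RESOLUTION = 0.99    # per-resolution price described above
ASSUMED_RESOLUTION_HOURS = 24  # inactivity window described above


@dataclass
class Conversation:
    # All field names are hypothetical.
    customer_confirmed: bool = False         # customer said the answer was satisfactory
    exited_without_more_help: bool = False   # customer left after the last answer
    hours_without_response: float = 0.0      # silence since the last answer
    returned_with_same_issue: bool = False   # customer came back later, same issue


def is_billable_resolution(c: Conversation) -> bool:
    """Apply the resolution rules; a later return reverses the resolution."""
    if c.returned_with_same_issue:
        return False  # reversal: billing is undone
    return (
        c.customer_confirmed
        or c.exited_without_more_help
        or c.hours_without_response >= ASSUMED_RESOLUTION_HOURS
    )


conversations = [
    Conversation(customer_confirmed=True),
    Conversation(exited_without_more_help=True),
    Conversation(hours_without_response=30),   # assumed resolution
    Conversation(hours_without_response=2),    # still open, not billable
    Conversation(customer_confirmed=True, returned_with_same_issue=True),  # reversed
]

billable = sum(is_billable_resolution(c) for c in conversations)
charge = round(billable * PRICE_PER_RESOLUTION, 2)
print(f"{billable} billable resolutions, ${charge:.2f} in usage charges")
```

The point of writing the rules down this precisely is that finance, product, and billing can all test the same definition against the same conversations.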

Zendesk: same Layer 3 territory, different defense

Zendesk includes AI agent resolutions per agent per month — 5 for Team, 10 for Pro/Growth, and 15 for Enterprise with a 10K annual ceiling per account.

What counts: a customer issue resolved by an AI agent without human involvement, after a period of customer inactivity, where the customer either confirmed or did not return.

What's excluded: escalations to humans, negative customer feedback, test conversations, internal QA.

Define the unit. Set the exclusions. Tie it to a clear pricing structure before scaling.
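A minimal sketch of that structure, using the allowances and exclusions listed above. Two things are assumptions made for illustration: that the 10K annual ceiling applies to every plan, and that allowances pool across agents over the year. The flag names are hypothetical, and none of this reflects Zendesk's actual billing logic.

```python
# Included AI agent resolutions per agent per month, by plan (from the text above).
INCLUDED_PER_AGENT_PER_MONTH = {"Team": 5, "Pro/Growth": 10, "Enterprise": 15}

# Assumption: the annual ceiling per account applies to every plan.
ANNUAL_CEILING_PER_ACCOUNT = 10_000

# Hypothetical flag names for the exclusions listed above.
EXCLUDED_FLAGS = {
    "escalated_to_human",
    "negative_feedback",
    "test_conversation",
    "internal_qa",
}


def included_resolutions_per_year(plan: str, agents: int) -> int:
    """Annual included resolutions, assuming allowances pool across agents."""
    uncapped = INCLUDED_PER_AGENT_PER_MONTH[plan] * agents * 12
    return min(uncapped, ANNUAL_CEILING_PER_ACCOUNT)


def counts_as_resolution(flags: set) -> bool:
    """A conversation counts only if none of the exclusion flags are present."""
    return not (flags & EXCLUDED_FLAGS)


print(included_resolutions_per_year("Team", 20))         # 5 * 20 * 12
print(included_resolutions_per_year("Enterprise", 100))  # hits the ceiling
print(counts_as_resolution({"escalated_to_human"}))
```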

Microsoft, cautionary: big numbers below the framework

Microsoft has disclosed:

- 15M paid Microsoft 365 Copilot seats

- +160% YoY seat growth

- 10x DAUs YoY

- 4.7M paid GitHub Copilot subscribers

These are real and impressive. They also sit below Layer 1.

Seats measure access. DAUs measure engagement. Neither tells you what the AI consumed, what it did, or what business result was produced. A user can have 30 Copilot conversations and produce nothing.

"AI engagement is up" stops being a satisfying answer in 2026.

The gross profit trap that hides in margin percentage

Here's the trap that catches finance teams who measure at the wrong layer.

Take a $0.99-per-resolution product, 50,000 conversations a month, and run four scenarios. The headline gross margin can drop from 95% to 74% — but margin percentage is still high enough to look survivable.

Now look at gross profit dollars: $47K → $22K. That's a 52% collapse in monthly gross profit dollars on the same product, in the same scenario where the percentage moved 21 points.

Why does margin percentage hide the damage?

- Inference cost is paid on all attempts, not just billable conversations

- Revenue arrives only on the billable subset, meaning Layer 3 outcomes, not Layer 1 attempts

- Quality drops and pricing pressure hit revenue, not cost, so they vanish in the margin percentage

Track gross profit dollars and AI conversion rate (billable outcomes as a share of attempts), not just margin percentage. The CFO has to be in the AI metrics conversation. The same product can be very different businesses depending on how Layer 1 and Layer 3 connect.
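To make the mechanism concrete, here is a small sketch in which cost is paid on every attempt and revenue arrives only on the billable share. The price and monthly volume come from the example above. The billable rates and per-attempt costs are hypothetical inputs chosen to land near the 95% and 74% endpoints; they are not the four scenarios referenced in the text.

```python
PRICE_PER_RESOLUTION = 0.99  # from the example above
ATTEMPTS_PER_MONTH = 50_000  # conversations per month, from the example above


def unit_economics(billable_rate: float, cost_per_attempt: float) -> dict:
    """Revenue is earned on billable outcomes; inference cost is paid on all attempts."""
    revenue = ATTEMPTS_PER_MONTH * billable_rate * PRICE_PER_RESOLUTION
    cogs = ATTEMPTS_PER_MONTH * cost_per_attempt
    gross_profit = revenue - cogs
    return {
        "revenue": revenue,
        "cogs": cogs,
        "gross_profit": gross_profit,
        "gross_margin": gross_profit / revenue,
    }


# Hypothetical inputs, for illustration only.
baseline = unit_economics(billable_rate=1.00, cost_per_attempt=0.05)
stressed = unit_economics(billable_rate=0.60, cost_per_attempt=0.15)

gp_drop = 1 - stressed["gross_profit"] / baseline["gross_profit"]
margin_points = (baseline["gross_margin"] - stressed["gross_margin"]) * 100

for name, s in (("baseline", baseline), ("stressed", stressed)):
    print(f"{name}: GP ${s['gross_profit']:,.0f}, margin {s['gross_margin']:.1%}")
print(f"Gross profit dollars fell {gp_drop:.0%}; margin moved {margin_points:.0f} points")
```

Under these assumed inputs, gross profit dollars fall by roughly half while the margin percentage moves about 20 points, which is the pattern described above.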

What AI framework to build, in order

Six steps. Focus on the first three.

- Identify where AI costs sit. Token costs, vendor invoices, internal AI tooling. Get this clean in your chart of accounts and by vendor, product, and feature.

- Define one work unit. One. The one most central to your product's value and most countable in your data. Use a written template. Sign off in writing across product, finance, and customer success.

- Build the unit dashboard. Revenue, COGS, gross margin, volume, cost per unit, quality. Get one dashboard right before you build five.

Then, once those three are working:

- Connect work units to cost. Cost per resolution, tokens per work unit. Your unit-economics view.

- Connect work units to outcomes. Document assumptions, get cross-functional buy-in, improve quarterly.

- Build the data table behind it. Event-level detail per work unit. This is the boring foundation that makes the dashboard credible.
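The last three steps can be sketched together: an event-level table, rolled up into tokens per work unit and cost per outcome. The record fields, names, and sample rows below are hypothetical, invented for this illustration. A real table would come from product telemetry joined to vendor invoices.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkEvent:
    # Hypothetical event-level record: one row per work unit attempted.
    event_id: str
    work_unit: str          # e.g. "ticket_resolution"
    tokens: int             # Layer 1: consumption
    inference_cost: float   # Layer 1: cost in dollars
    is_outcome: bool        # Layer 3: verified business result


def summarize(events: list) -> dict:
    """Roll event-level rows up into unit economics per work-unit type."""
    totals = defaultdict(lambda: {"events": 0, "tokens": 0, "cost": 0.0, "outcomes": 0})
    for e in events:
        t = totals[e.work_unit]
        t["events"] += 1
        t["tokens"] += e.tokens
        t["cost"] += e.inference_cost
        t["outcomes"] += int(e.is_outcome)

    summary = {}
    for unit, t in totals.items():
        summary[unit] = {
            "tokens_per_work_unit": t["tokens"] / t["events"],
            "cost_per_work_unit": t["cost"] / t["events"],
            # Cost of ALL attempts spread over verified outcomes only.
            "cost_per_outcome": t["cost"] / t["outcomes"] if t["outcomes"] else None,
            "outcome_rate": t["outcomes"] / t["events"],
        }
    return summary


# Sample rows, invented for illustration.
events = [
    WorkEvent("e1", "ticket_resolution", 4_000, 0.04, True),
    WorkEvent("e2", "ticket_resolution", 6_000, 0.06, False),
    WorkEvent("e3", "ticket_resolution", 5_000, 0.05, True),
]

summary = summarize(events)
print(summary["ticket_resolution"])
```

Cost per outcome is deliberately higher than cost per work unit here, because failed attempts still consume inference.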

Five AI finance traps to avoid

- Confusing layers in your reporting. Tokens are not work. Work is not an outcome. Outcomes are not business impact. Treating one as the other is how AI reporting becomes ambiguous and how boards stop trusting your numbers.

- Ignoring quality. Resolution rate up, CSAT down, repeat contacts up: that's not improvement. That's moving the problem around. You need outcomes AND quality.

- Forgetting gross margin. If you price on outcomes but don't understand inference cost, you'll create a margin problem. Measure both sides.

- Overcomplicating the metric. ARR worked because everyone understood it. NRR worked because it told a clear story. If you have to explain your AI metric three times, it's the wrong metric.

- Not reconciling AI activity to billing. If you charge by outcome, finance needs confidence that billable events are complete and accurate. This is a quote-to-cash problem.

Three things to do this week

If you only do three things from this framework:

- Pull a clean AI cost report. Last three months. Vendor by vendor, product by product. Not a guess; an actual report from your accounting system paired with usage data. Most finance teams cannot produce this on demand. That's the first problem to solve.

- Fill in a work unit definition template. One unit. One paragraph per column: Trigger, Exclusion, Data Source, Billing Treatment, Quality Check, Owner. Get product, finance, and customer success to agree in writing.

- Build a one-page AI financial metrics summary. Even if the data is incomplete. AI revenue, AI COGS, AI gross margin, work units completed, cost per unit. The first version might be embarrassing. The act of producing it surfaces every data gap.
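The work unit definition template from the second item can be captured as a simple structured record, so gaps and missing sign-offs are visible at a glance. This is a sketch under assumptions: the class, the method names, and the example values are all hypothetical. Only the six column names and the three sign-off functions come from the text above.

```python
from dataclasses import dataclass, field

# The six template columns named above.
TEMPLATE_COLUMNS = (
    "trigger",
    "exclusion",
    "data_source",
    "billing_treatment",
    "quality_check",
    "owner",
)

# The three functions that need to agree in writing.
REQUIRED_SIGNOFFS = {"product", "finance", "customer_success"}


@dataclass
class WorkUnitDefinition:
    name: str
    trigger: str = ""
    exclusion: str = ""
    data_source: str = ""
    billing_treatment: str = ""
    quality_check: str = ""
    owner: str = ""
    signoffs: set = field(default_factory=set)

    def missing_columns(self) -> list:
        """Template columns that are still blank."""
        return [c for c in TEMPLATE_COLUMNS if not getattr(self, c).strip()]

    def is_agreed(self) -> bool:
        """Complete only when every column is filled and all three teams signed."""
        return not self.missing_columns() and REQUIRED_SIGNOFFS <= self.signoffs


# Example values are invented for illustration.
draft = WorkUnitDefinition(
    name="ticket_resolution",
    trigger="AI agent closes a support ticket",
    exclusion="Escalations, test conversations",
    data_source="Support platform event log",
    signoffs={"product", "finance"},
)

print("Missing columns:", draft.missing_columns())
print("Agreed:", draft.is_agreed())
```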

Within 18 months, this will become a standard. Customers and boards will demand ROI proof at the resolution, AWU, or workflow level. Pricing will move to outcomes. Investors will ask for AI gross margin and AI work output the way they ask for NRR today.

The AI is doing the work. It's your job to count it correctly.

AI Metrics FAQs

What's the difference between AI work units and AI outcomes?

A work unit is a countable action your AI performed, such as a record updated, draft created, or workflow triggered. An outcome is a work unit verified to produce a defined business result, such as a ticket resolved, lead qualified, or PR merged. That's Layer 2 vs. Layer 3 in this framework. Most companies need to instrument work units before they can credibly count outcomes.

Are tokens a useful AI metric for finance teams?

Tokens are useful internally for cost management. They're an input to gross margin. They are not useful for customers, boards, or investors.

What is Salesforce's Agentic Work Unit, or AWU?

An AWU is one discrete task completed by an AI agent, such as a record updated, a workflow triggered, a decision made, or an MCP called. Salesforce has disclosed 2.4B AWUs delivered to date, 771M in Q4 alone, with +57% QoQ growth. It's a public attempt to define a unit of AI work and a good example of Layer 2 measurement.

What are good AI metrics to show a SaaS board?

Start with five: AI revenue, AI COGS, AI gross margin isolated from non-AI, inference efficiency ratio, and your top 10 most AI-expensive customers or cohorts. Tokens and seats alone won't satisfy a board in 2026.

How should we price AI features — per token, per query, or per outcome?

Per outcome, or Layer 3, is the strongest pricing position when you can credibly count and verify outcomes. Hybrid pricing, meaning a subscription floor plus usage overage, is the emerging standard for AI-enabled SaaS. Pure per-token pricing exposes customers to bill shock and rarely survives renewal.

This is from the first lesson in the AI Economics for SaaS Operators course.

Ben Murray, The SaaS CFO, has worked in finance and accounting for 25+ years. He’s been a SaaS CFO for 10+ years and began his career in the FP&A function. He holds an active Tennessee CPA license and earned his undergraduate degree from the University of Colorado at Boulder and MBA from the University of Iowa. He offers coaching, fractional CFO services, and SaaS finance courses.