How AI Changes the SaaS P&L: A CFO's Guide to AI Gross Margin

Key takeaways

- Traditional SaaS gross margins of 70%+ are compressing towards 50% for AI-native businesses. Inference cost is variable. Every API call has a real, scaling cost.

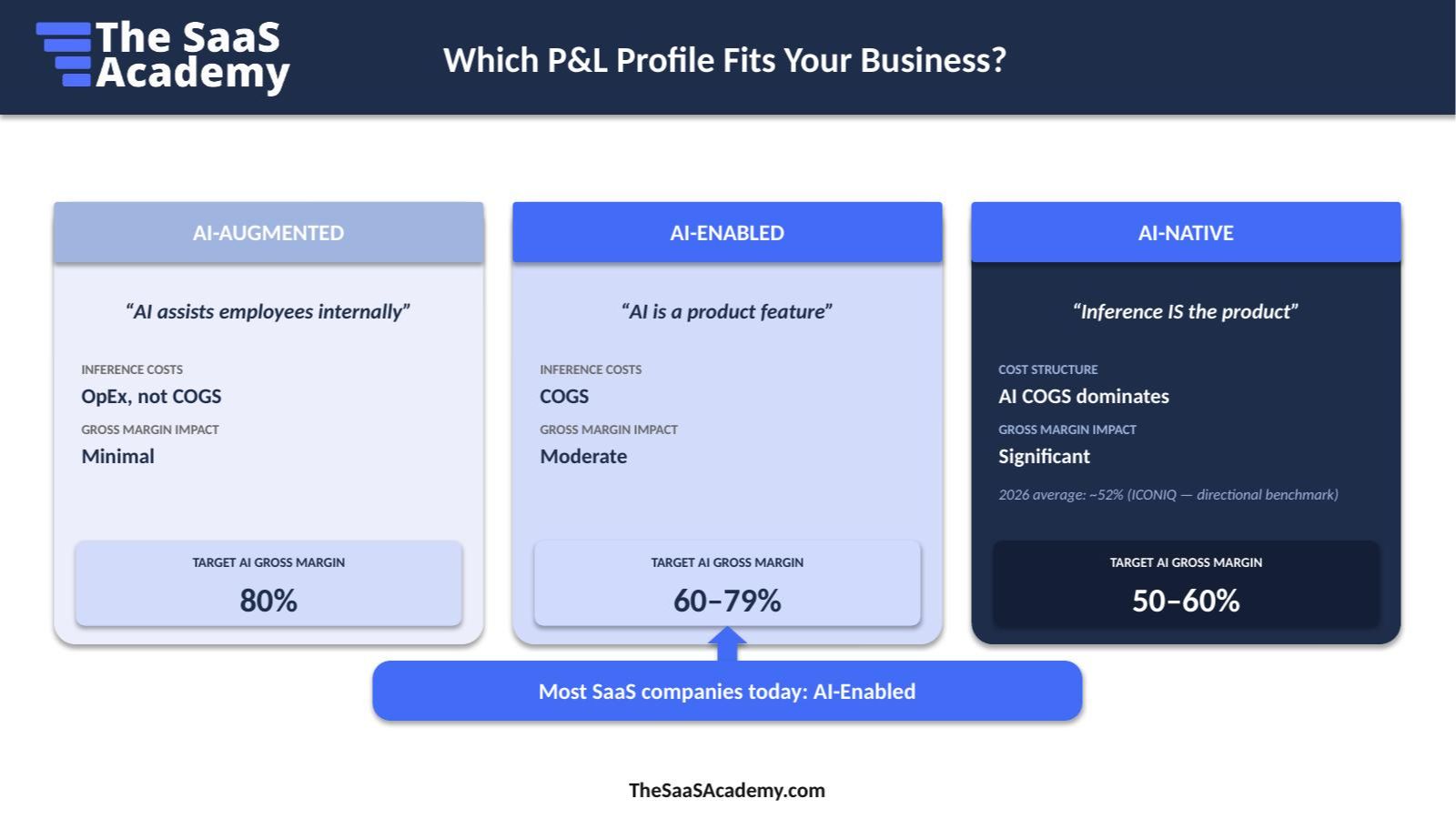

- There are three AI P&L profiles: AI-Augmented, with a target gross margin of roughly 80%; AI-Enabled, with a target of 60% to 79%; and AI-Native, with a target of 50% to 60%. Most SaaS companies today are AI-Enabled.

- AI costs often hide in Cloud and Hosting, Software subscriptions, R&D, and Infrastructure. If COGS is wrong, every downstream metric is wrong.

- GitHub Copilot's move to usage-based billing on June 1, 2026, is the canary. Flat AI subscriptions come under pressure when heavy users consume disproportionate compute.

- Five board questions are coming. This guide tells you how to be ready.

Your SaaS P&L was built for a world where COGS was mostly fixed. AI changed that.

For two decades, SaaS finance teams operated on a comfortable model: hosting and infrastructure scaled gradually, support and customer success were semi-fixed, and gross margins settled in the 70% to 80% range. Add a new customer, and most of the cost was already baked in. The marginal cost of revenue was small enough that gross margin barely moved.

Then your engineering team shipped an AI feature, and now every user interaction triggers a real, variable cost.

If you've added AI to your product, your board is about to ask questions your current financial dashboard was never built to answer:

- How much is AI really costing us?

- Are we pricing AI correctly?

- Which customers or features are destroying margin?

- What should we show the board every month?

This guide walks through how AI changes the SaaS P&L, what the new cost layers look like, and where to start.

The foundation doesn't change. The cost structure does.

If you've worked through the SaaS Metrics Foundation, you already know:

- Revenue streams dictate your COGS structure.

- COGS must be coded correctly because gross margin depends on it, and margin by revenue stream depends on it even more.

- Your dashboard is only as good as your data foundation.

Let’s be clear: none of that changes with AI. It just adds one new layer: variable inference costs that scale with every user interaction.

The difference matters more than it sounds.

| Cost type | Traditional SaaS COGS | AI COGS |
|---|---|---|
| Behavior | Semi-fixed, such as DevOps, support, and CSM | Variable: every API call has a cost |
| Scaling | Step-function | Linear or super-linear with usage |
| Customer impact | Largely uncoupled | Directly coupled to usage |

Once a meaningful share of your COGS becomes variable inference, the rules of margin management change. You can no longer assume that a new customer is mostly margin. You need to know, by customer, by feature, and sometimes by user, what the cost to serve actually is.
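To make the difference concrete, here is a minimal Python sketch comparing the cost of adding one more customer under a step-function hosting cost and under a linear inference cost. Every figure (the $10,000 hosting tier, 100 customers per tier, $150 of inference per customer, and the $500 price) is a hypothetical assumption, not a benchmark.

```python
import math

# Hypothetical monthly assumptions for illustration only.
HOSTING_TIER_COST = 10_000     # $ per hosting tier (semi-fixed, step-function)
CUSTOMERS_PER_TIER = 100       # customers one tier can serve
INFERENCE_PER_CUSTOMER = 150   # $ of model calls per customer (variable)
PRICE_PER_CUSTOMER = 500       # $ subscription price per customer


def total_cogs(customers: int, with_ai: bool) -> float:
    """Monthly COGS: hosting steps up per tier; inference grows with every customer."""
    tiers = max(1, math.ceil(customers / CUSTOMERS_PER_TIER))
    hosting = tiers * HOSTING_TIER_COST
    inference = customers * INFERENCE_PER_CUSTOMER if with_ai else 0
    return hosting + inference


def next_customer_margin(customers: int, with_ai: bool) -> float:
    """Gross margin on the next customer added at the current size."""
    added_cost = total_cogs(customers + 1, with_ai) - total_cogs(customers, with_ai)
    return (PRICE_PER_CUSTOMER - added_cost) / PRICE_PER_CUSTOMER


print(f"{next_customer_margin(50, with_ai=False):.0%}")  # no AI: next customer adds no COGS
print(f"{next_customer_margin(50, with_ai=True):.0%}")   # with AI: each customer carries its own cost
```

Under these assumptions, the 51st customer is 100% margin without AI and 70% with it. The hosting step only bites when a new tier is needed; the inference cost shows up on every customer, every month.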

Which AI P&L profile fits your business?

Not every SaaS company is affected the same way. Three profiles dominate today.

AI-Augmented: AI assists employees internally

You use AI tools internally, such as Copilot for engineers, AI summarization in finance, or ChatGPT for marketing. The AI doesn't touch your product.

Inference costs: OpEx, not COGS

Gross margin impact: Minimal

Target gross margin: roughly 80%

AI-Enabled: AI is a product feature

You've added AI features to a SaaS product. Customers trigger inference through normal product use.

Inference costs: COGS, because they are customer-facing

Gross margin impact: Moderate

Target AI gross margin: 60% to 79%

This describes most SaaS companies in 2026.

AI-Native: Inference is the product

The product is the model. Every customer interaction is an inference call.

Cost structure: AI COGS dominates

Gross margin impact: Significant

Target AI gross margin: 50% to 60%

2026 average: roughly 52%, based on the ICONIQ directional benchmark report

The further you move from AI-Augmented toward AI-Native, the more aggressively your P&L needs to expose AI costs as their own line item.
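One way to use these ranges is as a quick check of where a given margin sits for its profile. The sketch below hard-codes the directional ranges from this guide; treating "roughly 80%" for AI-Augmented as a floor of 80% is an assumption of the sketch, and the function name is hypothetical. The ranges are starting points, not pass/fail grades.

```python
# Directional target gross-margin ranges from this guide, as (low, high).
# Assumption: "roughly 80%" for AI-Augmented is treated as a floor of 80%.
TARGET_RANGES = {
    "AI-Augmented": (0.80, 1.00),
    "AI-Enabled": (0.60, 0.79),
    "AI-Native": (0.50, 0.60),
}


def compare_to_target(profile: str, gross_margin: float) -> str:
    """Describe where a gross margin sits relative to its profile's directional range."""
    low, high = TARGET_RANGES[profile]
    band = f"{low:.0%}-{high:.0%}"
    if gross_margin < low:
        return f"below target ({gross_margin:.0%} vs {band})"
    if gross_margin > high:
        return f"above target ({gross_margin:.0%} vs {band})"
    return f"within target ({gross_margin:.0%} vs {band})"


print(compare_to_target("AI-Native", 0.52))
print(compare_to_target("AI-Enabled", 0.55))
```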

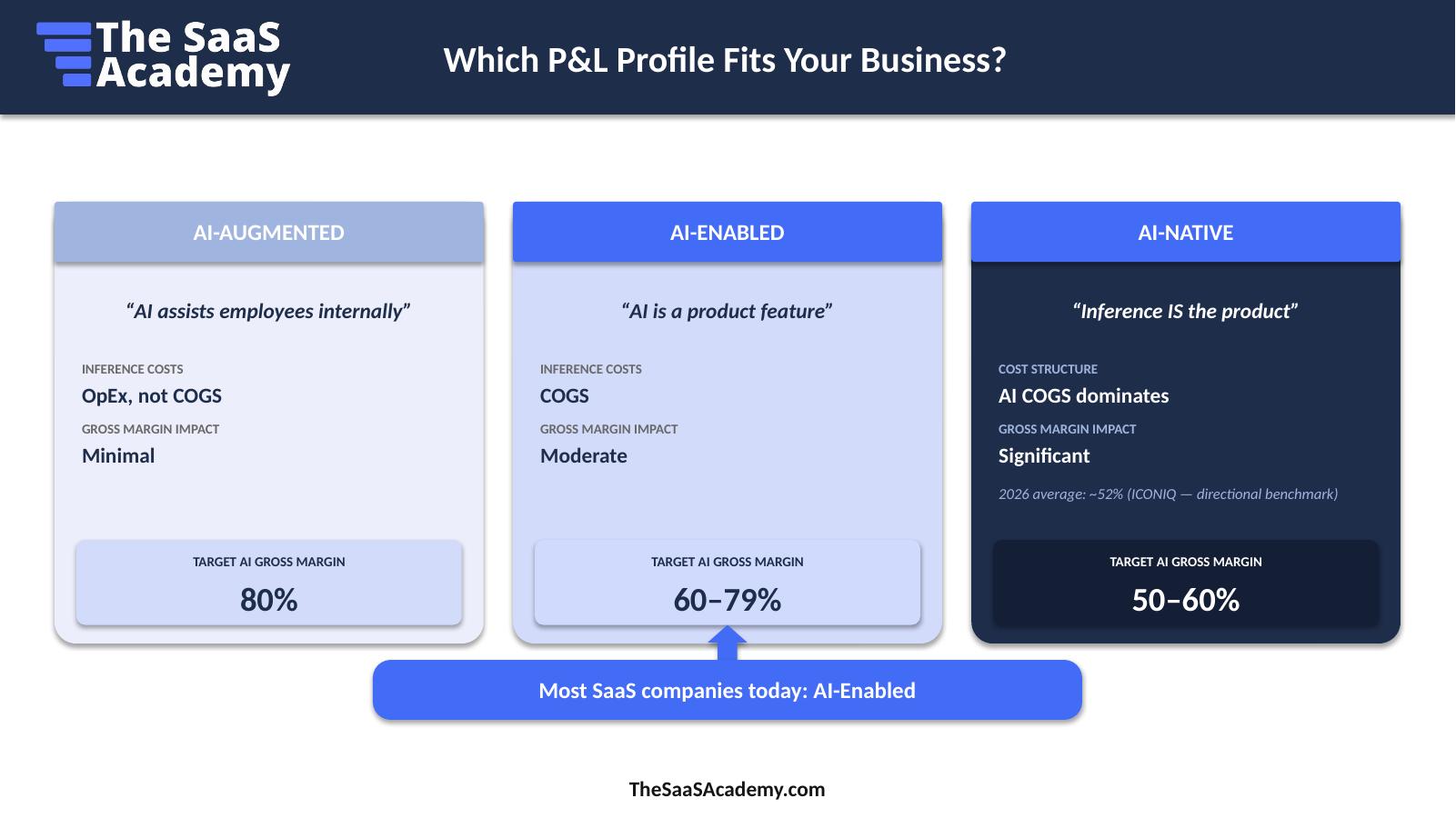

Where inference costs live in your SaaS P&L

The cleanest way to reconstruct your P&L is to stack the new layers explicitly:

Revenue

less Traditional COGS

less AI Inference Layer

less AI Infrastructure Layer

equals AI-Adjusted Gross Profit

AI Gross Margin = AI-Adjusted Gross Profit divided by Revenue

Traditional COGS includes DevOps, support, CSM, and services.

The AI Inference Layer is the direct cost of running models: model API fees (OpenAI, Anthropic, Gemini), per-call costs, and self-hosted compute.

The AI Infrastructure Layer is everything else customer-facing AI requires to work, including vector databases, model monitoring tools, and amortized fine-tuning costs.

Once both are pulled out as their own layers, the AI-Adjusted Gross Profit is the number that matters. Everything else flows from it.
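The stack above is simple arithmetic, which makes it easy to model. Here is a minimal Python sketch of the layered P&L; the class and field names are hypothetical and the dollar figures are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class AILayeredPnL:
    """One period of the AI-layered P&L. All amounts in dollars."""
    revenue: float
    traditional_cogs: float    # DevOps, support, CSM, services
    ai_inference: float        # model API fees, per-call costs, self-hosted compute
    ai_infrastructure: float   # vector databases, model monitoring, amortized fine-tuning

    @property
    def traditional_gross_margin(self) -> float:
        """What the P&L shows if the AI layers stay buried outside COGS."""
        return (self.revenue - self.traditional_cogs) / self.revenue

    @property
    def ai_adjusted_gross_profit(self) -> float:
        """Revenue less Traditional COGS, less Inference, less AI Infrastructure."""
        return (self.revenue - self.traditional_cogs
                - self.ai_inference - self.ai_infrastructure)

    @property
    def ai_gross_margin(self) -> float:
        """AI-Adjusted Gross Profit divided by Revenue."""
        return self.ai_adjusted_gross_profit / self.revenue


# Hypothetical month.
month = AILayeredPnL(revenue=1_000_000, traditional_cogs=200_000,
                     ai_inference=120_000, ai_infrastructure=30_000)
print(f"Traditional view: {month.traditional_gross_margin:.0%}")
print(f"AI-adjusted:      {month.ai_gross_margin:.0%}")
```

Same month, two answers: 80% when the AI layers sit somewhere else in the P&L, 65% once they are pulled into COGS.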

Flat AI subscriptions are under pressure

If you doubt that AI costs are a new variable layer, look at GitHub Copilot.

GitHub announced that Copilot would move to usage-based billing as of June 1, 2026. Why? Because the variance in usage is enormous and the cost gap between users is widening.

Two real Copilot use cases:

| | Light use | Heavy use |
|---|---|---|
| Usage | Single prompt, single response | Continuous, multi-step coding |
| Compute | Lightweight inference | Long-running, multi-model |
| Cost | Cents per interaction | Dollars to tens of dollars per task |
| Pricing fit | Fits inside flat pricing | Breaks flat-pricing math |

Same subscription price, very different gross-margin outcome. The CFO takeaway: flat subscription pricing hides margin leakage when heavy AI users consume disproportionate compute. Even an AI-native company eventually has to separate usage from the flat seat price.

If GitHub, sitting on Microsoft's infrastructure, has to move, your flat AI subscription is on a clock too.

Source: GitHub Blog, "GitHub Copilot is moving to usage-based billing," April 21, 2026.
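The arithmetic behind the light-versus-heavy comparison is worth seeing once. The sketch below prices two users on the same flat seat and then blends them. The $30 seat price, usage volumes, and unit costs are hypothetical assumptions chosen to match the "cents per interaction" and "dollars per task" scale above; they are not GitHub's actual prices or costs.

```python
# Hypothetical monthly assumptions; not GitHub's actual pricing or costs.
SEAT_PRICE = 30.00

USAGE_PROFILES = {
    # profile: (interactions or tasks per month, inference cost per unit in $)
    "light": (200, 0.02),  # single prompt, single response: cents each
    "heavy": (40, 5.00),   # long-running, multi-step tasks: dollars each
}


def monthly_cost(profile: str) -> float:
    units, cost_per_unit = USAGE_PROFILES[profile]
    return units * cost_per_unit


def seat_margin(profile: str) -> float:
    """Gross margin on one flat-priced seat for a given usage profile."""
    return (SEAT_PRICE - monthly_cost(profile)) / SEAT_PRICE


def blended_margin(seat_mix: dict[str, int]) -> float:
    """Gross margin across a mix of seats, e.g. {"light": 9, "heavy": 1}."""
    revenue = sum(count * SEAT_PRICE for count in seat_mix.values())
    cost = sum(count * monthly_cost(profile) for profile, count in seat_mix.items())
    return (revenue - cost) / revenue


print(f"light seat: {seat_margin('light'):.0%}")
print(f"heavy seat: {seat_margin('heavy'):.0%}")
print(f"9 light + 1 heavy: {blended_margin({'light': 9, 'heavy': 1}):.0%}")
```

Under these assumptions, the light seat earns about 87%, the heavy seat loses more than five times its price, and the blend lands near 21%. The flat price conceals that one seat in ten consumes roughly 85% of the compute.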

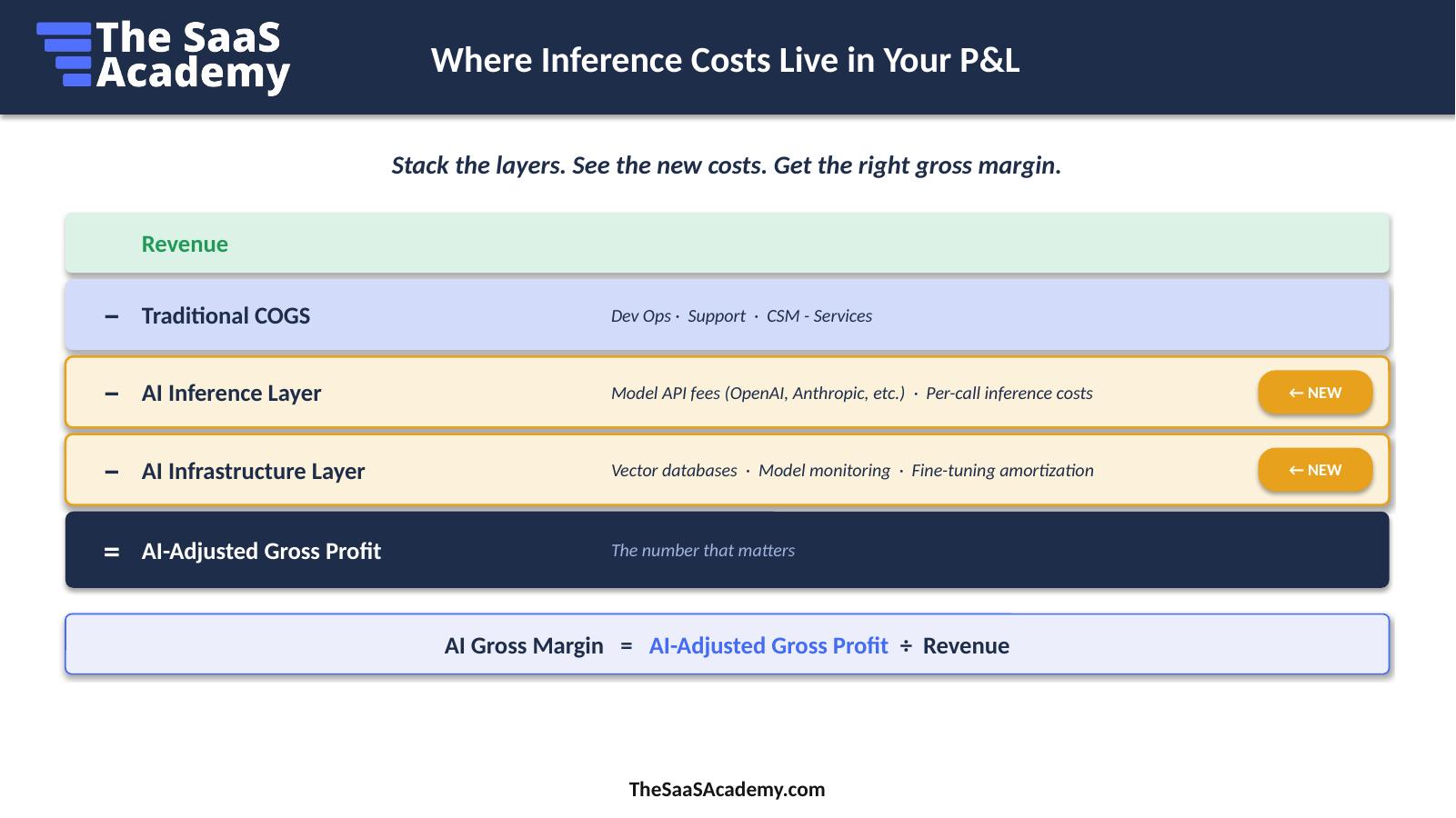

The AI costs are real. They're just hidden.

When you ask a finance team, "What's our AI gross margin?", the most common honest answer is: we don't know yet, because the costs aren't isolated and revenue is not AI-tagged.

Here's where AI costs are usually hiding:

- Cloud and Hosting: a catch-all line item where API fees disappear

- Software subscriptions: API costs mixed with regular SaaS tools

- R&D expense: training and experimentation costs in the wrong bucket

- Infrastructure: vector databases and model monitoring, untagged

Where they should go:

COGS

- Model API fees, such as OpenAI and Anthropic, for customer-facing features.

- Vector databases serving customer data at inference, including RAG and search.

- Model monitoring for production, customer-facing models.

- Fine-tuning amortization.

OpEx

- Internal AI productivity tools.

- Employee-facing copilots for AI-Augmented use.

- Engineering productivity tools.

The pattern: the same cost can be COGS or OpEx. What changes is who triggers it and where it runs. Customer-triggered and customer-facing means COGS. Employee-triggered and internal-only means OpEx, coded to the department the employee sits in. For internal tools, the cost follows the employee.
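The rule reduces to two questions, which can be sketched as a small function. The function name, the "customer"/"employee" labels, and the choice to flag mixed cases for manual review are assumptions of this sketch; the guide itself only covers the two clean cases.

```python
from enum import Enum


class Treatment(Enum):
    COGS = "COGS"
    DEPARTMENT_OPEX = "OpEx, coded to the triggering employee's department"


def classify_ai_cost(triggered_by: str, customer_facing: bool) -> Treatment:
    """Code an AI cost by who triggers it and where it runs.

    triggered_by: "customer" or "employee" (hypothetical labels for this sketch).
    """
    if triggered_by == "customer" and customer_facing:
        return Treatment.COGS
    if triggered_by == "employee" and not customer_facing:
        return Treatment.DEPARTMENT_OPEX
    # Any other combination is not settled by the rule above,
    # so flag it for review instead of guessing.
    raise ValueError(f"Review manually: triggered_by={triggered_by!r}, "
                     f"customer_facing={customer_facing}")


print(classify_ai_cost("customer", True).value)   # model API call from an in-product feature
print(classify_ai_cost("employee", False).value)  # an engineer's Copilot seat
```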

If COGS is wrong, gross margin is wrong, and every metric built on it is wrong. Fix the structure once. Trust the metrics every month.

The five board questions coming your way

Set yourself up to answer these before you're asked:

- What's our AI gross margin, separated from the rest of the business?

- How does inference cost scale as we add more users?

- Are we subsidizing heavy users with flat pricing?

- What's our path back to 70%+ gross margins? Should we target that?

- How does our AI cost structure compare to public company benchmarks?

You don't need a perfect answer to all five next month. You do need a defensible framework for getting there. The rest of this post is that framework.

Your action this month: find where AI costs are hiding

One action. One deliverable. Don't fix it yet. Just find it.

- Pull your current P&L for the last 90 days.

- Open your AI P&L mapping worksheet, or build a simple two-column sheet.

- In Column A, list each AI cost. In Column B, identify where it currently sits. Is it in Hosting? Cloud infrastructure? Software subscriptions? R&D?

- Mark it. That's your baseline.

The deliverable is a single sheet showing where every AI dollar currently lives in your books. It will be ugly. That's the point. You can't fix what you haven't found.
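If you prefer to build the baseline in code rather than a spreadsheet, a minimal Python sketch looks like this. Each row mirrors the two-column sheet (the AI cost, then where it sits today) plus an amount; the line items and dollar figures are hypothetical. The function only totals what it finds and deliberately does not reclassify anything, in keeping with "don't fix it yet."

```python
from collections import defaultdict

# Hypothetical 90-day AI cost lines:
# (Column A: the AI cost, Column B: where it sits in the books today, amount in $)
ai_cost_lines = [
    ("OpenAI API - in-app assistant", "Cloud and Hosting", 42_000),
    ("Gemini API - document summaries", "Cloud and Hosting", 11_000),
    ("Vector database - customer search", "Infrastructure", 9_500),
    ("Anthropic API - prototype experiments", "R&D", 6_000),
    ("Copilot seats - engineering", "Software subscriptions", 3_900),
]


def baseline_by_current_account(lines):
    """Total AI dollars by the account they currently sit in, largest first."""
    totals = defaultdict(float)
    for _ai_cost, current_account, amount in lines:
        totals[current_account] += amount
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))


for account, total in baseline_by_current_account(ai_cost_lines).items():
    print(f"{account:<24} ${total:>10,.0f}")
```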

Five takeaways

- AI changes the cost structure. Variable inference is a new layer your traditional COGS model didn't have.

- Know your P&L profile. AI-Augmented, AI-Enabled, and AI-Native have different targets and different treatment.

- Build the AI-layered P&L. Revenue less Traditional COGS, less Inference, less AI Infrastructure, equals AI gross profit.

- AI costs are hiding in plain sight. Cloud, software subscriptions, and R&D are the places to look before they become material.

- Flat subscriptions are under pressure. When usage and cost don't connect, heavy users quietly destroy your coveted margin.

Up next: how to classify AI costs above the line, set up cost tagging in AWS, GCP, and Azure, and build the AI COGS waterfall.

AI Finance Metrics FAQs

What is AI gross margin?

AI gross margin is gross margin calculated specifically for AI-driven revenue, with all customer-facing inference and AI infrastructure costs pulled into COGS. The formula is:

AI Gross Margin = (AI Revenue less AI COGS) divided by AI Revenue

It's reported separately from blended gross margin because the cost behavior is fundamentally different. AI inference is variable instead of semi-fixed.
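To see why the separate line matters, here is a minimal sketch with hypothetical numbers. It assumes AI revenue is already tagged and that AI COGS contains only the customer-facing inference and AI infrastructure attributable to that revenue; in most books, neither holds until the costs are isolated and revenue is AI-tagged.

```python
def gross_margin(revenue: float, cogs: float) -> float:
    return (revenue - cogs) / revenue


# Hypothetical month, split by revenue stream.
core_revenue, core_cogs = 800_000, 160_000  # traditional SaaS subscription
ai_revenue, ai_cogs = 200_000, 90_000       # AI features: inference + AI infrastructure

ai_gm = gross_margin(ai_revenue, ai_cogs)
core_gm = gross_margin(core_revenue, core_cogs)
blended_gm = gross_margin(core_revenue + ai_revenue, core_cogs + ai_cogs)

print(f"AI gross margin:      {ai_gm:.0%}")
print(f"Core gross margin:    {core_gm:.0%}")
print(f"Blended gross margin: {blended_gm:.0%}")
```

The blended 75% looks healthy. The AI stream on its own is at 55%, and that is the number that moves as usage grows.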

Should inference costs go into COGS or OpEx?

Customer-facing inference belongs in COGS, either alongside DevOps or in a new AI cost center. Employee-facing or internal inference, such as engineers using Copilot or finance using AI summarization tools, belongs in OpEx, coded to the department the employee sits in.

The test: did a customer trigger this API call? If yes, it's COGS. If an employee triggered it for internal productivity, it gets coded to their department. G&A is not a dumping ground for AI expense.

What is the typical AI gross margin for SaaS in 2026?

Directional ranges: AI-Augmented businesses target 80%, AI-Enabled businesses target 60% to 79%, and AI-Native businesses target 50% to 60%. ICONIQ's State of AI puts the AI-native average around 52% in 2026.

These are starting points, not pass/fail grades. They will move as model costs continue to deflate.

Why are SaaS gross margins falling because of AI?

Because inference is a variable cost. Traditional SaaS COGS scaled gradually with infrastructure and headcount. AI inference scales linearly, and sometimes super-linearly, with every user interaction. That introduces real cost-of-revenue exposure that didn't exist before and compresses margin until pricing models adapt.

How do I find AI costs that are hidden in my P&L?

Pull your last 90 days of cloud, software subscription, R&D, and infrastructure spend. Tag each line for whether it's customer-facing AI, employee-facing AI, or non-AI. You’ll probably need to work with your product team.

Roll the customer-facing AI lines up into a new COGS bucket. Roll employee-facing AI into the applicable departments. That's your baseline. From there, you can set up native tagging in AWS, GCP, or Azure for ongoing visibility.
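The tag-and-roll-up step can be sketched in a few lines of Python. The three tags come from the steps above; the line items, department names, and amounts are hypothetical, and in practice the tags come from working through each line with your product team.

```python
from collections import defaultdict

# Hypothetical 90-day spend lines: (description, tag, department, amount in $)
# tag is "customer_ai", "employee_ai", or "non_ai"
spend_lines = [
    ("Model API fees - in-product assistant", "customer_ai", None, 42_000),
    ("Vector database - customer search", "customer_ai", None, 9_500),
    ("Copilot seats", "employee_ai", "Engineering", 3_900),
    ("AI summarization tool", "employee_ai", "Finance", 1_200),
    ("Core application hosting", "non_ai", None, 60_000),
]


def roll_up(lines):
    """Customer-facing AI goes to one new COGS bucket; employee-facing AI goes to departments."""
    ai_cogs = 0.0
    ai_opex_by_department = defaultdict(float)
    non_ai = 0.0
    for _description, tag, department, amount in lines:
        if tag == "customer_ai":
            ai_cogs += amount
        elif tag == "employee_ai":
            ai_opex_by_department[department] += amount
        elif tag == "non_ai":
            non_ai += amount
        else:
            raise ValueError(f"Untagged or unknown tag: {tag!r}")
    return {"AI COGS": ai_cogs,
            "AI OpEx by department": dict(ai_opex_by_department),
            "Non-AI": non_ai}


print(roll_up(spend_lines))
```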

This is Lesson 1 of the AI Economics for SaaS CFOs course. Previous: The Four Layers of AI Measurement. Next: AI COGS: What Goes Above the Line?

Ben Murray, The SaaS CFO, has worked in finance and accounting for 25+ years. He’s been a SaaS CFO for 10+ years and began his career in the FP&A function. He holds an active Tennessee CPA license and earned his undergraduate degree from the University of Colorado at Boulder and MBA from the University of Iowa. He offers coaching, fractional CFO services, and SaaS finance courses.